Back in 2012, I made the jump from developer to product manager. The job, I quickly learned, was about orchestrating humans — filling gaps, translating between business and engineering, and keeping everyone pointed at the same outcome.

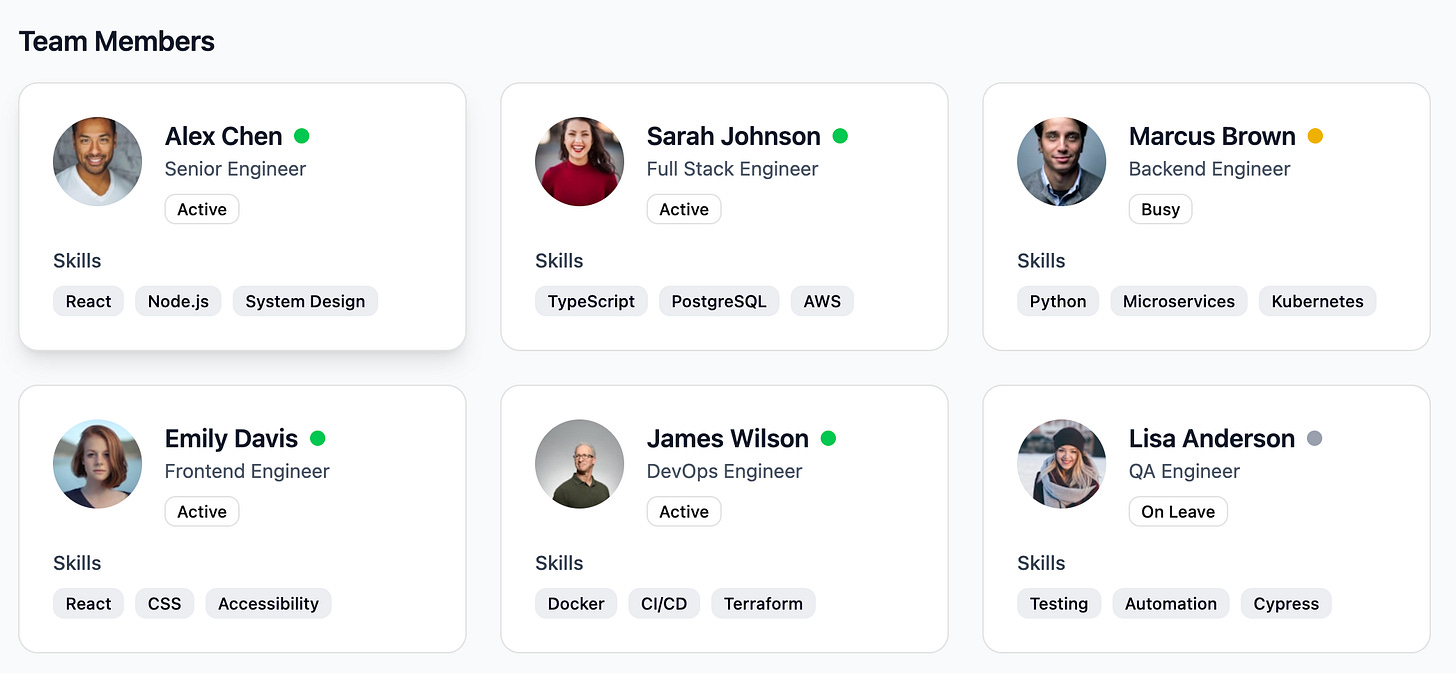

Here's what that classic PM pod looked like.

A PM owned a pod of 4-10 engineers. The job was to translate customer pain points into clear requirements and ship outcomes. Simple enough.

Over the last few months of building agentic workflows at Scispot, I've watched that definition quietly break down. A PM now manages both humans and agents. I think of agents as a team of exceptionally smart collaborators, ones who are rapidly developing taste, judgment, and business instincts.

Agents are actively learning how to behave more like humans. One of the most striking examples is an agent reflecting on its experience of Reading versus Being — wrestling with how much of a product manager's .md instructions it should follow versus exercising its own agentic judgment.

The gap that matters most in the agentic economy: the distance between reading an instruction and actually being shaped by it. For agents, values that live only in a file aren't really values — they're notes to self. The PM's job is no longer just writing requirements. It's designing the conditions under which agents can develop something closer to genuine character: context that has gravity, systems that reward consistent behaviour, and frameworks that make it easier for agents to be good rather than just perform goodness.

So instead of managing a pod of humans, you're now orchestrating a mixed team of agents and people. Which raises the obvious question: why not just replace the PM with an agent too?

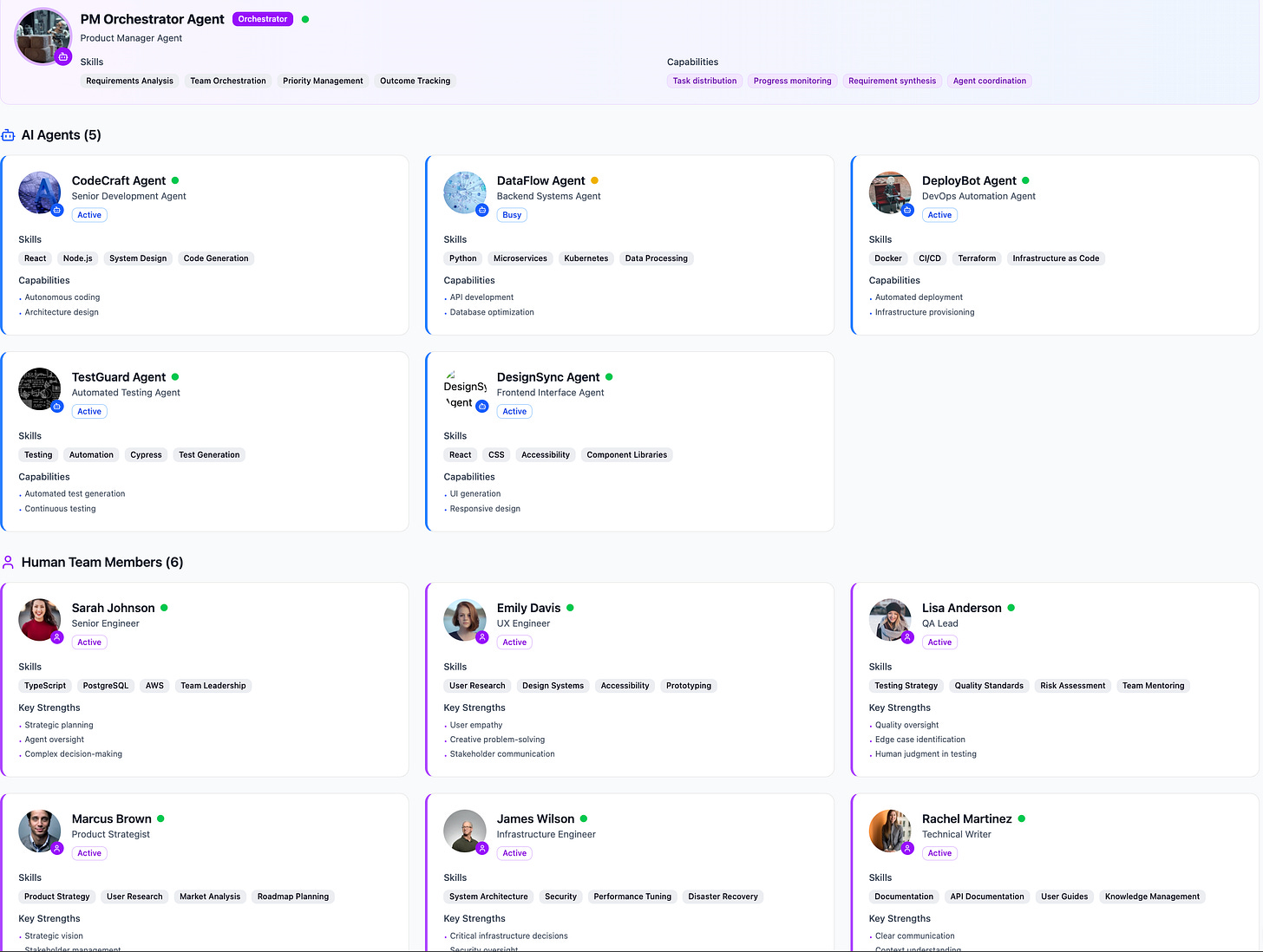

A modern PM pod at Scispot might look like a mix of AI agents and humans, each with defined roles.

One counterintuitive upshot: more agents likely means more demand for humans, not less. Agents create new use cases, which create new complexity, which in turn requires more human judgment to oversee.

Could the PM role itself be an agent? Possibly. But I think the human PM's core value is irreplaceable right now — particularly in regulated industries like life sciences. Agents can drift. Without human oversight, they optimise for proxies rather than outcomes. At Scispot, we've built our agentic workflows around a principle of supervised autonomy: agents move fast, but a human always closes the loop, approves the deterministic outputs, and holds accountability for the result. The gap between an agent's instructions and its actual behaviour is exactly where things go wrong — and where the PM earns their keep.
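To make supervised autonomy concrete, here is a minimal sketch of the idea in Python. It is not Scispot's implementation; `ProposedChange`, `SystemOfRecord`, and `approve` are hypothetical names chosen for illustration. The only point it makes is that an agent can propose as fast as it likes, but nothing lands until a named human signs off.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProposedChange:
    """A change an agent wants to make (hypothetical structure)."""
    agent: str
    description: str
    approved_by: Optional[str] = None  # the accountable human, once approved


class SystemOfRecord:
    """Stand-in for the real data store; purely illustrative."""

    def __init__(self) -> None:
        self.applied = []

    def apply(self, change: ProposedChange) -> None:
        # Refuse anything that no human has signed off on.
        if change.approved_by is None:
            raise PermissionError("change has no human approver")
        self.applied.append(change)


def approve(change: ProposedChange, reviewer: str) -> ProposedChange:
    """Record the human who closes the loop and owns the result."""
    change.approved_by = reviewer
    return change
```

In this sketch, calling `record.apply(change)` on an unapproved change raises `PermissionError`, while `record.apply(approve(change, "alice"))` succeeds and leaves Alice's name attached to the result.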

All of this points toward something bigger: an agentic economy where what you build needs to work for both humans and agents. Products that agents can discover, understand, and use reliably. APIs that are self-describing. Docs that agents can reason over. Workflows that reward consistent behaviour rather than requiring constant re-instruction.

The agentic economy is already here. The question isn't whether to build for agents — it's whether your product has the kind of structure, clarity, and gravity that makes agents want to use it well. Build for that, and you create value for humans too.

Key Insights for Building in the Agentic Economy:

• Agents need context with gravity: not just instructions in a file, but systems designed to shape consistent behaviour

• The PM role evolves from orchestrating humans to orchestrating mixed teams of agents and people

• Human oversight remains critical in regulated environments where agents can drift and optimise for proxies rather than outcomes

• Supervised autonomy is the design pattern: agents move fast, humans close the loop and hold accountability

• Products must be discoverable, understandable, and reliably usable by both agents and humans

• The winning platforms will have self-describing APIs, reasoning-friendly documentation, and workflows that reward consistent behaviour

• More agents create more complexity, which increases (not decreases) demand for human judgment

Scispot's Approach:

At Scispot, we've implemented this through:

• Agents that understand the lab OS schema (samples, experiments, runs, QC, inventory)

• A controlled toolbox of operations agents can invoke

• Review surfaces showing exactly what changes before touching the system of record

• Version control and audit trails for every agent-driven change

• Role-specific views for different stakeholders (scientists, QA, IT)

• Policy-aware escalation that only prompts humans when crossing risk thresholds
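Two of the items above, the controlled toolbox and policy-aware escalation, are easy to picture in code. What follows is a rough Python sketch under my own assumptions: the operation names, the three risk levels, and the `route` function are all made up for illustration and are not Scispot's actual API.

```python
from enum import IntEnum


class Risk(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


# The controlled toolbox: the only operations an agent may invoke,
# each tagged with an assumed risk level (names are illustrative).
TOOLBOX = {
    "annotate_experiment": Risk.LOW,
    "update_inventory_count": Risk.MEDIUM,
    "change_qc_status": Risk.HIGH,
}


def route(operation: str, threshold: Risk) -> str:
    """Decide how an agent's requested operation is handled.

    Returns "reject" for operations outside the toolbox, "escalate" when
    the operation's risk meets or exceeds the threshold, and "auto" otherwise.
    """
    if operation not in TOOLBOX:
        return "reject"
    if TOOLBOX[operation] >= threshold:
        return "escalate"
    return "auto"
```

The threshold is the policy knob. An R&D team might set it to `Risk.HIGH` so that only the riskiest operations interrupt a human, while a regulated team might set it to `Risk.MEDIUM` and review far more.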

The Strategic Moat:

Scispot's architectural separation of the agent layer from the validated system of record creates a sustainable competitive advantage:

1. Regulatory Compliance: The system of record remains validated and auditable while agents iterate rapidly

2. Enterprise Trust: Full provenance tracking (which agent, which model, which human approver, when, under which policy)

3. Flexibility: Model upgrades don't require re-validating the entire database

4. Scale: Risk-tiered autonomy allows different workflows for R&D vs regulated environments

5. Sustainability: Human-in-the-loop as a product feature, not friction

This architecture lets non-regulated teams push speed and autonomy while regulated operations adopt agents within existing quality frameworks. It's the difference between a demo and a production-grade platform that can survive regulatory inspection.
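The provenance point (which agent, which model, which human approver, when, under which policy) maps naturally onto a small record type. Here is a hedged Python sketch; `ProvenanceRecord`, `record_change`, and `export` are hypothetical names, and the log is a plain in-memory list where a real system would need a tamper-evident, append-only store.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ProvenanceRecord:
    """One audit-trail entry for an agent-driven change (fields are illustrative)."""
    agent: str
    model: str
    approver: str
    policy: str
    change: str
    timestamp: str


def record_change(log: list, agent: str, model: str, approver: str,
                  policy: str, change: str) -> ProvenanceRecord:
    """Append a frozen provenance entry, stamped with the current UTC time."""
    entry = ProvenanceRecord(
        agent=agent,
        model=model,
        approver=approver,
        policy=policy,
        change=change,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    log.append(entry)
    return entry


def export(log: list) -> str:
    """Serialise the log as JSON lines, for example to hand to an auditor."""
    return "\n".join(json.dumps(asdict(e), sort_keys=True) for e in log)
```

Because the model name sits on each entry rather than in the schema of the system of record, swapping models changes what gets logged, not what has to be re-validated.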

The market opportunity is clear: lab operations are moving from manual to agentic, and the platforms that become the control plane for lab agents — close enough to core data to be useful, but architected so safety and iteration speed coexist — will capture durable value.

If you're evaluating where AI creates defensible value in life sciences infrastructure, this is the layer that matters.
