How should CSV testing be performed?

You just spent two hours cleaning a huge customer spreadsheet. Then you click “upload” in your new system, and all you get is a vague “Import Failed” message. Finding one broken row in thousands can feel impossible. That kind of mess usually starts when teams rely too much on manual checks.

Dirty data wastes time every day. It also creates bigger problems than lost hours. Bad imports can break email campaigns, damage customer records, and derail a project because of one file issue that should have been caught earlier.

The good news is that you do not have to hunt for hidden commas or extra spaces by hand. Modern CSV testing tools work like spell-check for data. They catch format issues before the file hits your system, which makes uploads a lot less stressful.

Why your Excel habits might be breaking your CSV files

You may spend hours making a spreadsheet look right, then watch it break the moment you export it. Excel files are built for people to read and edit. A CSV is just plain text arranged in rows and columns. It drops the colors, formulas, highlights, and other formatting, and keeps only the raw values separated by commas.

That is why a date that looked fine in Excel can turn into a strange number, or a currency symbol can disappear. Excel stores a date as a day count and shows it through a display format. If that format is lost or changed before export, the raw number is what lands in the CSV. CSV has no visual layer, so problems that formatting was hiding show up fast.
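If the strange number is an Excel date serial, which is one common cause, it can be turned back into a date. The sketch below assumes Excel's default 1900 date system and a date from March 1900 onward; the function name is made up for illustration.

```python
from datetime import date, timedelta

# Base date for Excel's default (1900) date system.
# Assumption: the workbook does not use the older 1904 date system.
EXCEL_BASE_DATE = date(1899, 12, 30)


def excel_serial_to_date(serial: int) -> date:
    """Convert an Excel day serial (for dates after Feb 1900) to a date."""
    return EXCEL_BASE_DATE + timedelta(days=serial)


print(excel_serial_to_date(45292))  # 2024-01-01
```

A column full of five-digit numbers where you expected dates is a strong hint that this is what happened.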

Trying to catch those issues by eye is slow and unreliable. CSV quality checks need more than scrolling through rows and hoping nothing is off. You do not need deep technical skills to handle this well. You need a system that checks the file for you.

How automated data validation keeps bad data out

Manually scanning rows for one missing letter or one broken field is tiring and easy to get wrong. Modern data testing tools handle that work much faster. They can scan thousands of rows in seconds and flag issues right away.

A good way to think about this is a bouncer at the door. You give the tool a clear list of rules. If a value does not match the rules, it does not get in. That means the problem shows up before it causes a failed upload or broken record later.

These rules can be simple. A zip code must contain only numbers. An email must include “@”. A required field cannot be empty. Once those checks are in place, CSV validation becomes part of the routine instead of a last-minute scramble.
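For readers curious what such rules look like in code, here is a minimal Python sketch of the three checks above. The column names (`name`, `email`, `zip`) and the sample data are assumptions made for illustration, not the format of any particular tool.

```python
import csv
import io

# Hypothetical sample file: row 3 has a bad email and zip, row 4 has no name.
SAMPLE = """name,email,zip
Ana Ruiz,ana@example.com,90210
Bo Chen,bo.example.com,1234A
,cy@example.com,10001
"""


def check_row(row: dict) -> list[str]:
    """Return a list of rule violations for one row."""
    name = (row.get("name") or "").strip()
    email = row.get("email") or ""
    zip_code = row.get("zip") or ""

    problems = []
    if not name:
        problems.append("name is required")
    if "@" not in email:
        problems.append("email must include @")
    if not (zip_code.isascii() and zip_code.isdigit()):
        problems.append("zip must contain only digits")
    return problems


def validate(text: str) -> dict[int, list[str]]:
    """Return {line_number: problems} for every row that breaks a rule."""
    reader = csv.DictReader(io.StringIO(text))
    report = {}
    # Data starts on line 2 because line 1 is the header.
    for line_number, row in enumerate(reader, start=2):
        problems = check_row(row)
        if problems:
            report[line_number] = problems
    return report


for line_number, problems in validate(SAMPLE).items():
    print(line_number, problems)
```

Each rule is one `if` statement, so adding a new rule means adding one more check rather than rereading the whole file by eye.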

The hidden problems that break imports

Even if your data looks fine, the file structure can still cause trouble. CSV files use commas to separate columns. So if you have a name like “Smith, John” and the value is not wrapped in double quotes, the file treats that comma as a divider and shifts the rest of the row out of place.
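The shift is easy to reproduce with Python's built-in `csv` module. This is a small sketch with made-up data; `rows_with_wrong_width` is a hypothetical helper that flags any row whose column count differs from the header.

```python
import csv
import io

unquoted = "name,city\nSmith, John,Boston\n"
quoted = 'name,city\n"Smith, John",Boston\n'


def parse(text: str) -> list[list[str]]:
    """Parse CSV text into a list of rows."""
    return list(csv.reader(io.StringIO(text)))


def rows_with_wrong_width(text: str) -> list[int]:
    """Return line numbers whose column count differs from the header."""
    rows = parse(text)
    expected = len(rows[0])
    return [n for n, row in enumerate(rows[1:], start=2) if len(row) != expected]


print(parse(unquoted)[1])  # ['Smith', ' John', 'Boston']
print(parse(quoted)[1])    # ['Smith, John', 'Boston']
print(rows_with_wrong_width(unquoted))  # [2]

# Writing with csv.writer adds the quotes automatically when a value needs them.
buffer = io.StringIO()
csv.writer(buffer).writerow(["Smith, John", "Boston"])
print(repr(buffer.getvalue()))  # '"Smith, John",Boston\r\n'
```

Counting columns per row is a cheap first test, because a stray comma almost always changes the count.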

Encoding is another common problem. If you have ever seen “René” turn into “RenÃ©”, you have seen an encoding issue: the file was saved in one character encoding (often UTF-8) and read back as another. Testing encoding and special characters ahead of time helps keep names and symbols intact.
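The garbling can be reproduced in a few lines of Python. This sketch assumes the usual culprit, UTF-8 text read as Windows-1252; `decodes_as_utf8` is a hypothetical helper that tests whether a file's bytes are valid UTF-8 before import.

```python
original = "René"

# Save as UTF-8, then read the same bytes back as Windows-1252.
utf8_bytes = original.encode("utf-8")
garbled = utf8_bytes.decode("cp1252")
print(garbled)  # RenÃ©


def decodes_as_utf8(data: bytes) -> bool:
    """Return True if the bytes are valid UTF-8."""
    try:
        data.decode("utf-8")
        return True
    except UnicodeDecodeError:
        return False


print(decodes_as_utf8(utf8_bytes))                 # True
print(decodes_as_utf8(original.encode("cp1252")))  # False
```

A file that fails this check was probably exported in a legacy encoding and needs converting before a UTF-8 system reads it.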

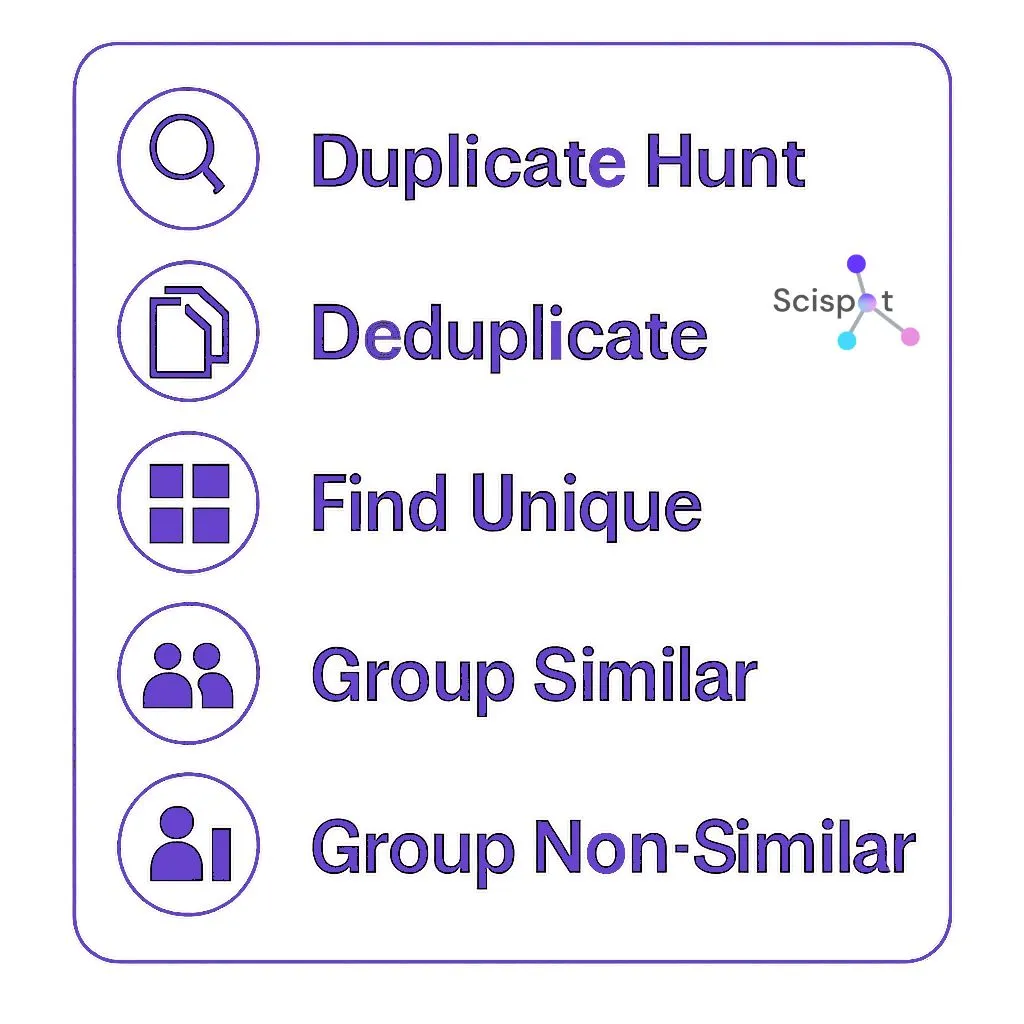

Duplicates matter too. A repeated row can lead to duplicate emails, repeat shipments, or messy reports. A good testing tool catches those duplicates right away, which saves money and avoids awkward mistakes.
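As a rough illustration of duplicate detection, the Python sketch below finds both fully repeated rows and repeated values in one column. The sample data and the choice to ignore case and surrounding spaces in emails are assumptions; a real tool may match differently.

```python
import csv
import io
from collections import Counter

# Hypothetical export: line 4 repeats line 2, line 5 reuses the same email.
SAMPLE = """email,order_id
ana@example.com,1001
bo@example.com,1002
ana@example.com,1001
Ana@Example.com ,1003
"""


def duplicate_rows(text: str) -> list[list[str]]:
    """Return rows that appear more than once, compared exactly."""
    reader = csv.reader(io.StringIO(text))
    next(reader, None)  # skip the header
    counts = Counter(tuple(row) for row in reader)
    return [list(row) for row, n in counts.items() if n > 1]


def duplicate_values(text: str, column: str) -> list[str]:
    """Return values repeated in one column, ignoring case and spaces."""
    reader = csv.DictReader(io.StringIO(text))
    counts = Counter(row[column].strip().lower() for row in reader)
    return sorted(value for value, n in counts.items() if n > 1)


print(duplicate_rows(SAMPLE))             # [['ana@example.com', '1001']]
print(duplicate_values(SAMPLE, "email"))  # ['ana@example.com']
```

The second check matters because near-duplicates, such as the same email with different capitalization, slip past an exact row comparison.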

These issues are hard to spot by hand, especially in large files. That is why automation helps. The real win is not just speed. It is trust.

Choosing a tool for large CSV files

Large spreadsheets can freeze your screen or crash your laptop. If you need to validate big CSV files, it often makes more sense to use a tool built for that job. Cloud-based platforms can scan large files without draining your machine.

The right tool depends on how much data you deal with:

Browser-based checkers work well for small files under 1,000 rows. They are fast and often free, but they may not be the best fit for sensitive data.

Desktop apps are useful for medium-sized jobs. They keep files offline, which can help with privacy, but they may struggle with larger scans.

Enterprise platforms are built for very large datasets. They usually offer stronger security, more rules, and better scale, though they come with a subscription cost.

Some paid tools also compare multiple CSV files at once. That helps when you need to see what changed between one export and the next without checking row by row.

Should you use code or a point-and-click tool?

Some IT teams use Python libraries to validate data. That means they write scripts to check files against custom rules. It can be powerful, but it is not always practical for day-to-day work.

Free and open-source tools can sound appealing, but many of them depend on command-line use. That means typing instructions into a terminal instead of using a visual interface. For most teams, that adds friction to a task that should be simple.

Point-and-click tools make more sense for many business users. They give you strong validation without making you learn code or wait for a developer every time you need to test a file.

A 5-step checklist for every CSV import

A short routine before each upload can prevent a lot of trouble. The goal is to make sure the file matches the structure your system expects.

Here is a simple checklist:

- Header check: make sure column names match exactly.
- Data type check: confirm dates, numbers, and text fields are in the right format.
- Empty cell scan: find missing required values.
- Duplicate check: catch accidental repeat rows.
- Encoding test: make sure special characters display correctly.
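The first two items on the checklist can be sketched in Python as follows. The expected column names and the year-month-day date format are assumptions chosen for the example; your system will have its own.

```python
import csv
import io
from datetime import datetime

# Hypothetical column layout the target system expects.
EXPECTED_HEADER = ["customer_id", "signup_date", "email"]


def header_problems(actual: list[str], expected: list[str]) -> list[str]:
    """Compare the file's header with the expected column names."""
    problems = []
    missing = [c for c in expected if c not in actual]
    extra = [c for c in actual if c not in expected]
    if missing:
        problems.append(f"missing columns: {missing}")
    if extra:
        problems.append(f"unexpected columns: {extra}")
    if not problems and actual != expected:
        problems.append("columns are in a different order")
    return problems


def is_date(value: str) -> bool:
    """Return True if the value parses as year-month-day."""
    try:
        datetime.strptime(value, "%Y-%m-%d")
        return True
    except ValueError:
        return False


def check_file(text: str) -> list[str]:
    """Run the header check, then the date check on every row."""
    reader = csv.reader(io.StringIO(text))
    header = next(reader, [])
    problems = header_problems(header, EXPECTED_HEADER)
    if problems:
        return problems  # row checks are unreliable if the header is wrong
    date_col = header.index("signup_date")
    for line_number, row in enumerate(reader, start=2):
        value = row[date_col] if date_col < len(row) else ""
        if not is_date(value):
            problems.append(f"line {line_number}: bad date {value!r}")
    return problems


good_header = "customer_id,signup_date,email\n1,2024-01-15,a@example.com\n2,15/01/2024,b@example.com\n"
bad_header = "customer_id,Signup Date,email\n1,2024-01-15,a@example.com\n"

print(check_file(good_header))  # ["line 3: bad date '15/01/2024'"]
print(check_file(bad_header))
```

Checking the header first is a deliberate choice: if the columns are wrong, every later check would be looking at the wrong data.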

It also helps to compare the current file with the last one when needed. That way you only process what changed instead of rechecking everything from scratch.
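A comparison like that can be sketched in Python by matching rows on a shared identifier. The `id` column and the sample exports are assumptions for illustration, and the sketch assumes each identifier appears only once per file.

```python
import csv
import io


def load_by_key(text: str, key: str) -> dict[str, dict]:
    """Index every row of a CSV by the value in its key column."""
    return {row[key]: row for row in csv.DictReader(io.StringIO(text))}


def diff_exports(old_text: str, new_text: str, key: str = "id") -> dict[str, list[str]]:
    """Report which keys were added, removed, or changed between exports."""
    old = load_by_key(old_text, key)
    new = load_by_key(new_text, key)
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "changed": sorted(k for k in old.keys() & new.keys() if old[k] != new[k]),
    }


# Hypothetical exports from two consecutive days.
last_export = "id,email\n1,a@example.com\n2,b@example.com\n3,c@example.com\n"
this_export = "id,email\n1,a@example.com\n2,b@new.example.com\n4,d@example.com\n"

print(diff_exports(last_export, this_export))
# {'added': ['4'], 'removed': ['3'], 'changed': ['2']}
```

Only the rows listed under added and changed need a fresh validation pass.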

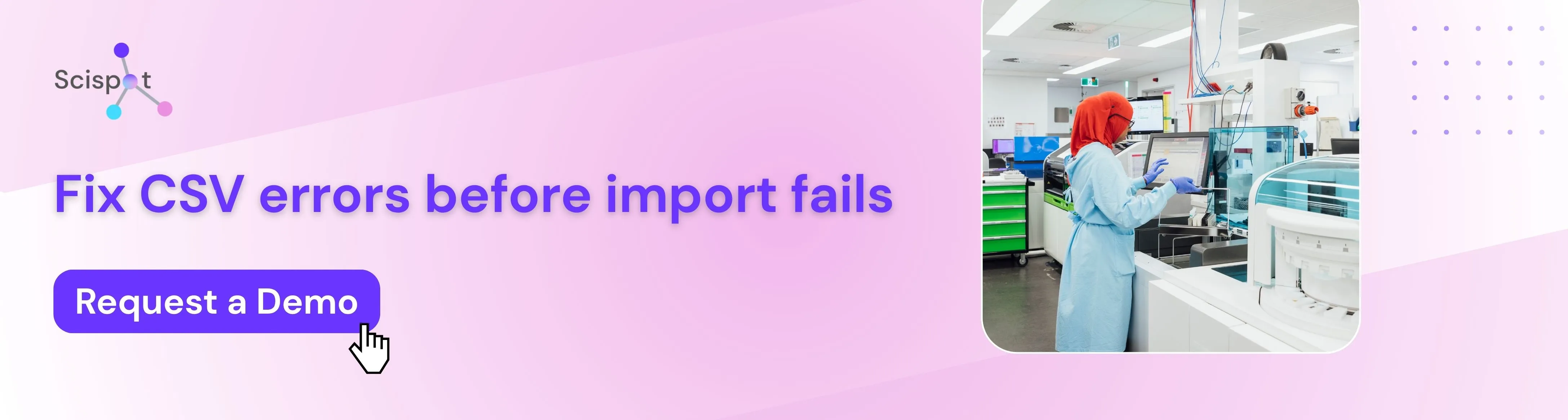

Why teams choose Scispot for CSV validation

Scispot works well for CSV testing because it does more than catch file errors. It helps teams build a cleaner, more reliable workflow around uploads and structured data.

Instead of treating validation like a one-time cleanup step, Scispot helps teams standardize data, catch format issues early, reduce manual review, and keep records consistent across systems. That becomes more important as teams grow and manage larger customer, lab, or operational datasets. One broken import can turn into reporting issues, failed workflows, or lost time.

With a modern interface, configurable rules, and support for structured data at scale, Scispot gives teams a practical way to test and trust their CSV imports without turning every upload into a technical mess.

From failed uploads to a cleaner workflow

You do not have to dread the “Import Failed” message. With the right validation process, you move from guessing whether a file will work to knowing it is ready.

A simple place to start is this: pick a tool, define your rules, and test one file before upload. That small habit catches the hidden issues that usually waste the most time.

Try it with one messy file you already have. You will likely spot problems you would not have seen by scrolling. Over time, that shift leads to fewer errors, less rework, and a much calmer workflow.
